Amazon Rufus Doesn’t Care About Your Keyword Rank

Only 22% of Amazon’s top search results overlap with Rufus recommendations. Here’s what that gap costs you, and how to close it.

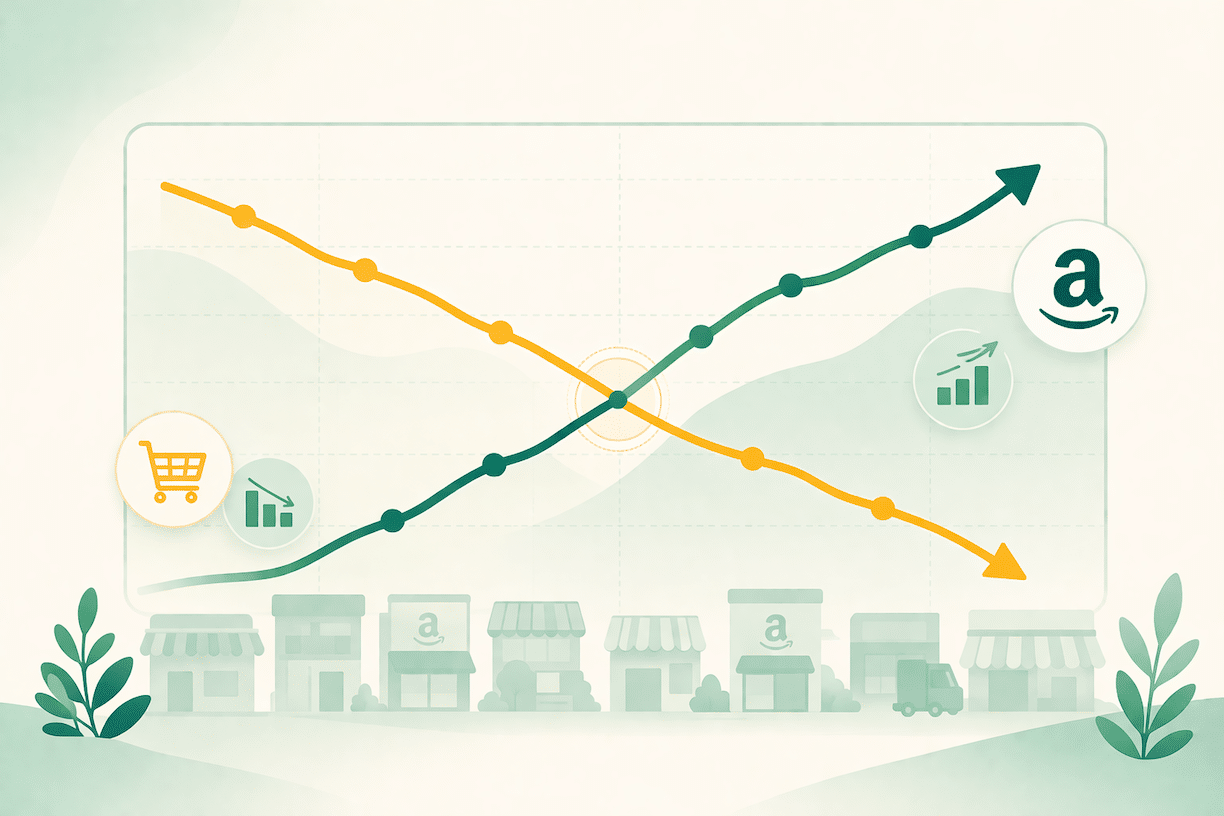

Most Amazon sellers assume that ranking well in search means being visible everywhere on the platform. It’s a reasonable assumption. It’s also increasingly wrong.

A December 2025 analysis by Mars United of more than 1,000 products compared traditional search results with Rufus recommendations for the same queries. The overlap: 22%.

That means for every five products sitting on page one of Amazon search, roughly four are likely passed over when a customer asks Rufus the same question. A brand can have excellent keyword rankings, a competitive price, and a strong Best Sellers Rank (BSR) and still be largely invisible on the surface where high-intent shoppers are increasingly making their decisions.
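To make the 22% figure concrete, here is a minimal sketch of one way an overlap rate could be computed for a single query. The methodology behind the Mars United number isn't described here, so the function name, the placeholder ASINs, and the choice of denominator (the search results) are all assumptions for illustration.

```python
def overlap_rate(search_asins, rufus_asins):
    """Share of search-result ASINs that also appear in Rufus recommendations."""
    search = set(search_asins)
    if not search:
        return 0.0
    return len(search & set(rufus_asins)) / len(search)


# Hypothetical placeholder ASINs for one query; not real data.
search_results = ["A1", "A2", "A3", "A4", "A5"]
rufus_picks = ["A3", "B7", "B8"]

rate = overlap_rate(search_results, rufus_picks)
print(f"{rate:.0%} of search results also appear in Rufus picks")
```

With these made-up lists, one of five search results shows up in the Rufus picks, which works out to 20%, close to the reported average.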

This isn’t a bug in Rufus. It’s the whole point. Rufus is solving a different problem than the search algorithm, and it’s evaluating your listing using different signals. Treating them as the same thing is a real and growing problem for sellers.

What Rufus Is Actually Doing

Amazon Rufus launched in beta in early 2024. By Q3 2025, Amazon reported that more than 250 million customers had used it, with monthly active users up 149% year-over-year and interactions up 210%. Shoppers who engage with Rufus are 60% more likely to complete a purchase during that session. Amazon’s CEO Andy Jassy said Rufus is on track to drive $10 billion in incremental annualized sales.

Those numbers matter, but they’re not the most useful way to understand what Rufus does to your business. The useful framing is this: Rufus is a product recommendation engine built on top of your listing content, and it makes recommendations based on its ability to answer questions.

When a customer asks “what do I need to start an indoor garden?” or “what are customers saying about the durability of this backpack?”, Rufus reads your product title, bullet points, A+ Content, attribute data, customer reviews, and Q&A section. It synthesizes all of that and decides whether your product is the right answer to that question.

If your listing can answer the question clearly, Rufus recommends you. If it can’t, Rufus moves on to a competitor who can, regardless of your keyword ranking.

Ready to Start Growing Your Amazon Brand?

Canopy’s Partners Achieve an Average 84% Profit Increase!

Why Your SEO Work Doesn’t Transfer Automatically

Traditional Amazon SEO is built around keyword matching. The Amazon algorithm looks for your target terms in your title, bullets, and backend fields, then weights that against conversion history, velocity, and a handful of other signals. It returns a ranked list. The customer browses.

Rufus operates on different logic. It doesn’t return a ranked list. It constructs an answer. And to construct a useful answer, it needs content it can actually read, interpret, and cite.

A listing optimized purely for keyword density often fails this test. The bullet points are feature lists: “Stainless steel construction. BPA-free. Dishwasher safe.” There’s no answer to “who is this for” or “what problem does this solve” or “how does this compare to the alternative.” Backend fields are stuffed with exact-match variations but contain no natural language phrases that map to how customers actually ask questions.

That listing can rank on page one. Rufus will still route around it if a competitor’s listing gives clearer, more specific answers.

The inversion that’s happening: Rufus tends to recommend items with average ratings close to 4 stars and thousands of detailed reviews, while products with thin content and generic positioning get passed over regardless of their search rank. Content quality is now a direct determinant of AI visibility in a way it wasn’t before.

What Rufus Evaluates

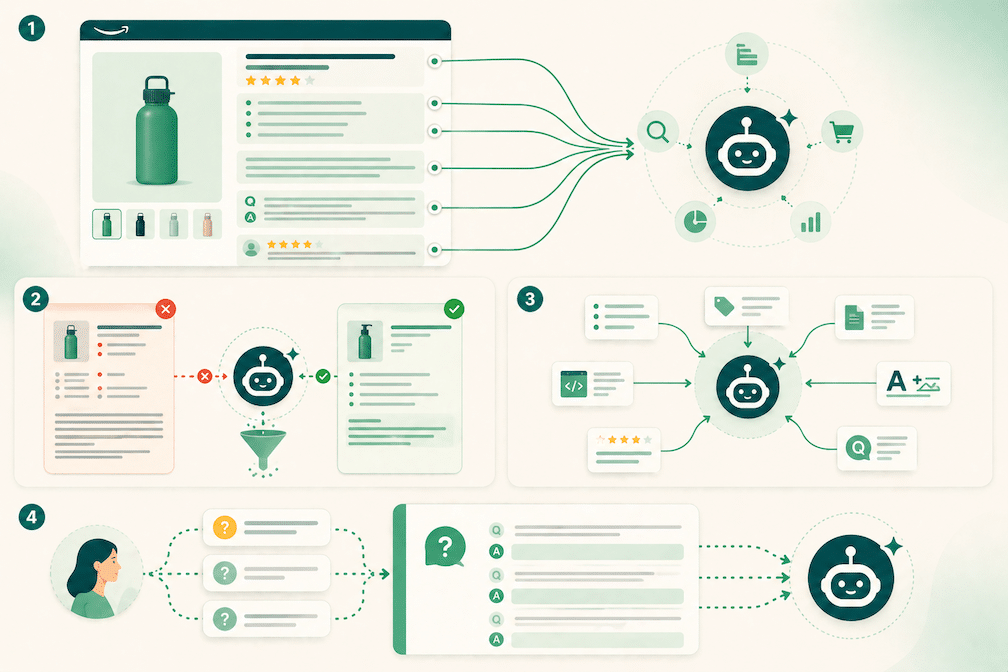

Rufus reads six distinct content sources when deciding whether and how to recommend a product.

Product attributes. Every field you fill in Seller Central, including the optional ones, is structured data Rufus uses for comparison queries. When a customer asks “what’s the difference between these two options?”, Rufus pulls from attribute data. Incomplete fields make your product invisible in comparison responses.

Listing copy. Title and bullet points need to answer questions, not just list features. Rufus is looking for use-case language: who this is for, what problem it solves, when customers use it. “Best for: laptops up to 15.6 inches” is citable. “High-quality laptop bag with multiple compartments” is not.

A+ Content. FAQ modules, comparison charts, and brand story sections are rich sources Rufus pulls from directly. The alt text on every A+ Content image also matters — Rufus reads those fields too.

Customer reviews. Rufus analyzes review sentiment to answer questions like “what do people like about this?” and “what are the common complaints?” It cites specific review content. Products with thin or generic reviews give Rufus less to work with and more reason to route to a competitor with richer feedback.

Q&A section. Rufus verifiably cites Q&A content. Proactively seeding this section with 10-15 high-intent questions and thorough answers is one of the higher-leverage optimizations available to sellers right now.

Image infographics. Rufus can extract text from infographic images using optical character recognition. Images with readable spec overlays, comparison callouts, or use-case text provide additional citable content beyond your written copy.

None of this requires starting from scratch. Most brands have a foundation. The work is filling the gaps between what your listing says and what Rufus needs to answer the questions your customers are actually asking.
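One way to start finding those gaps is to check attribute completeness. The sketch below assumes you have exported a listing's attributes into a simple dictionary; the field names and values are hypothetical, and real attribute names vary by category.

```python
def missing_attributes(listing, expected_fields):
    """Return the expected fields that are absent or blank in a listing."""
    missing = []
    for field in expected_fields:
        value = listing.get(field)
        if value is None or str(value).strip() == "":
            missing.append(field)
    return missing


# Hypothetical field names and values; real names vary by category.
expected = ["material", "dimensions", "weight", "compatible_devices", "care_instructions"]
listing = {
    "material": "Stainless steel",
    "dimensions": "",
    "weight": "1.2 lb",
}

gaps = missing_attributes(listing, expected)
fill_rate = 1 - len(gaps) / len(expected)
print(f"Missing: {gaps}")
print(f"Fill rate: {fill_rate:.0%}")
```

A blank field counts as missing here, on the assumption that an empty value gives Rufus nothing to compare.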

Start With the Audit

The most efficient starting point is asking Rufus the questions your customers ask, then reading the answers carefully.

Navigate to one of your top ASINs and ask Rufus five things:

- “What is this product for?”

- “What do people like about this product?”

- “What don’t people like about this product?”

- “What are people buying instead of this product?”

- “Why do customers choose this product over alternatives?”

What comes back tells you how Rufus currently sees your brand. Most sellers find at least one significant gap between their intended positioning and Rufus’s actual representation of their product. Common findings: Rufus is recommending a competitor primarily because that competitor has 40 more answered Q&A questions. Or the product is being associated with a customer complaint that’s present in reviews but never addressed in the listing copy. Or Rufus can’t articulate a clear use case because the listing never provided one.

These are fixable problems. Knowing which ones exist is the work before the work.

After updating listing content, allow 24 to 48 hours for changes to propagate, then retest. The goal is to make Rufus’s answer to those five questions look like your positioning document.
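The retest loop can be tracked with a small script. This is a sketch under stated assumptions: it uses no Rufus API, so you paste the answers in by hand, and the example answers and key phrases are made up. It only checks whether phrases from your positioning document show up anywhere in what Rufus said.

```python
DIAGNOSTIC_QUESTIONS = [
    "What is this product for?",
    "What do people like about this product?",
    "What don't people like about this product?",
    "What are people buying instead of this product?",
    "Why do customers choose this product over alternatives?",
]


def positioning_coverage(answers, key_phrases):
    """Map each key phrase to whether any pasted answer mentions it."""
    combined = " ".join(answers.values()).lower()
    return {phrase: phrase.lower() in combined for phrase in key_phrases}


# Answers are pasted in by hand after asking Rufus; these are made-up examples.
answers = {
    DIAGNOSTIC_QUESTIONS[0]: "A laptop bag for daily commuting.",
    DIAGNOSTIC_QUESTIONS[1]: "Customers like the padded strap.",
}
key_phrases = ["laptops up to 15.6 inches", "daily commuting"]

for phrase, found in positioning_coverage(answers, key_phrases).items():
    status = "OK" if found else "MISSING"
    print(f"{status:8}{phrase}")
```

Running it before and after a listing update gives a simple record of which positioning points Rufus has picked up. Exact phrase matching is crude, so treat a "MISSING" result as a prompt to reread the answer, not as a verdict.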

The Broader Shift: GEO Beyond Amazon

Rufus is the clearest example of a larger pattern. Across all major platforms, AI systems are now answering shopping questions directly rather than returning lists of links.

According to Adobe’s 2025 survey of 5,000 US consumers, 53% now use AI tools in their shopping journey. Ecommerce traffic from AI assistants has been doubling every two months since September 2024. That traffic comes from Amazon Rufus, but also from ChatGPT, Google AI Overviews, and Perplexity — all of which are synthesizing answers to purchase-related questions from across the web.

Generative Engine Optimization (GEO) is the practice of structuring content so AI systems can read, extract, and cite it regardless of platform. The underlying logic is consistent: AI systems prefer content that directly answers specific questions, uses clear natural language, structures information in extractable units, and provides specific facts rather than broad claims.

This applies to your Amazon listings. It also applies to your brand site, your blog content, your landing pages, and any content that AI systems might encounter when a customer asks about your category.

The brands building this content architecture now are establishing a structural advantage that compounds as AI-mediated discovery becomes the default. The brands still working from a keyword-first playbook built for a different era of search are becoming progressively less visible on the surface that’s growing fastest.

The Competitive Window

Most Amazon sellers haven’t done this work yet. That’s the window.

Rufus optimization requires specific knowledge of which content signals drive recommendations, how to structure listings so AI can extract and cite them, and how to test and iterate based on what Rufus actually says about your products. It’s operationally different from traditional listing work, and most brands are still figuring out what it even requires.

The brands capturing Rufus recommendations today share a few common traits: they have complete attribute data, they’ve structured their bullet points around use cases rather than features, they’ve built out A+ Content with FAQ and comparison modules, and they’re actively managing their Q&A sections. They don’t necessarily have better products. They have listings that give Rufus what it needs to recommend them.

Getting there starts with understanding what Rufus says about your products right now. If you haven’t asked the five diagnostic questions above, that’s the first step.

How Canopy Management Can Help

Built for Amazon. Designed for Growth

Ready to partner with a team that has the systems and expertise to scale your brand?

Canopy Management delivers end-to-end eCommerce growth, leading the industry in Amazon marketplace strategy while powering expansion through Shopify, Meta, and Google. Our full-funnel approach — from marketplace optimization to customer acquisition — has generated over $3.3 billion in partner revenue and made us the trusted growth engine for brands worldwide.

Schedule a strategy session with our team to discover exactly how our proven frameworks can accelerate your growth.

FAQ

Rufus optimization does not replace traditional keyword optimization. Keyword work still matters for search ranking, and search-first customers exist alongside Rufus users. The practical challenge is that the two approaches require different work. A listing can be well optimized for one and significantly underperform in the other. Most brands need to run them in parallel rather than choosing between them.

Based on available research, Rufus weights content quality and completeness heavily. Mars United’s December 2025 analysis found that Rufus tends to recommend products with strong ratings (consistently above 4 stars) and substantial review volume, while products with thin content are largely excluded regardless of keyword ranking. It also actively cites Q&A content and A+ Content modules, which means those fields have direct influence on recommendation outcomes.

Run the five-question diagnostic first to understand your current positioning. Then prioritize in this order: complete all Seller Central attribute fields, rewrite bullet points to address use cases explicitly, seed the Q&A section with 10-15 common questions and thorough answers, and update A+ Content with FAQ and comparison modules. Those four steps address the most common gaps we see across accounts.

Walmart has its own AI shopping assistant (Sparky), and the same principles apply: structured content, complete attribute data, specific use-case language, and strong review profiles. The GEO layer extends further, to ChatGPT, Google AI Overviews, and any AI system that might field questions about your product category. The tactical details differ by platform, but the foundational logic is the same.

Amazon doesn’t currently provide seller-facing attribution data for Rufus-driven traffic. The proxy indicators worth tracking are organic session volume, conversion rate trends, review quality and recency, and Q&A engagement. The most direct measurement is retesting the five-question diagnostic after changes propagate to see whether Rufus’s representation of your product has improved. Seller-facing attribution data will come eventually — the brands building strong Rufus positions now will be able to measure what they built.
